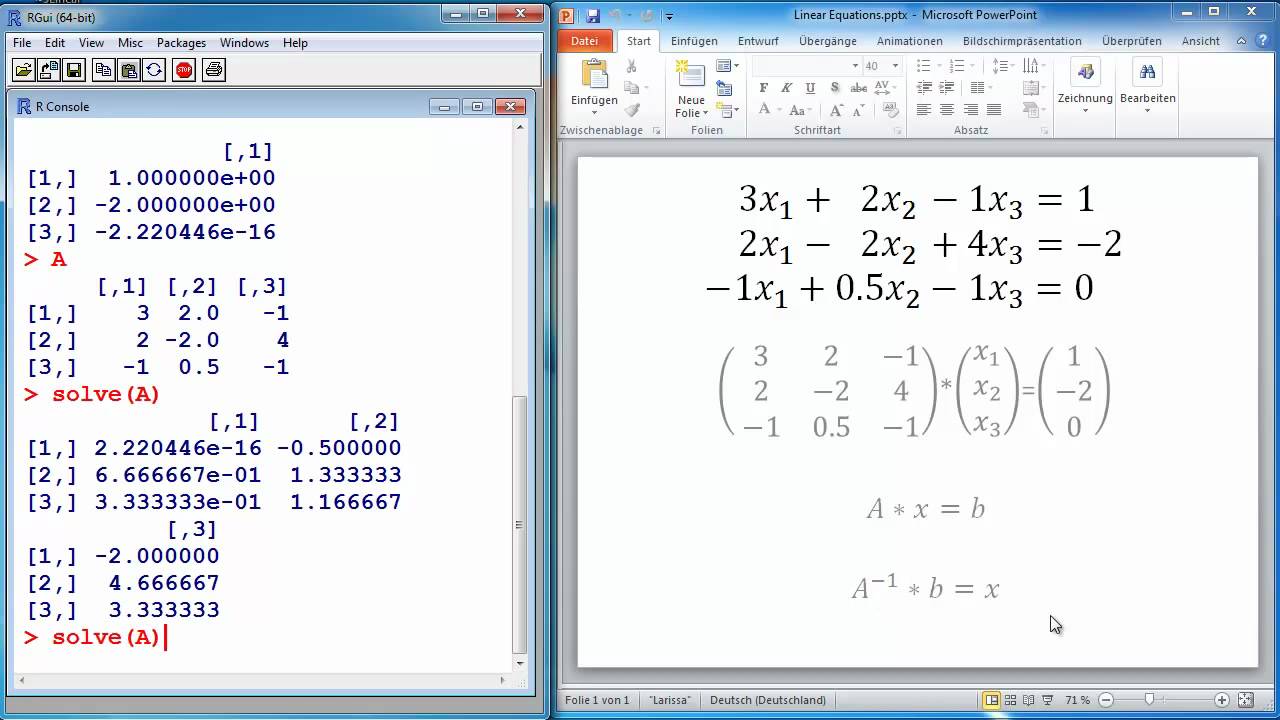

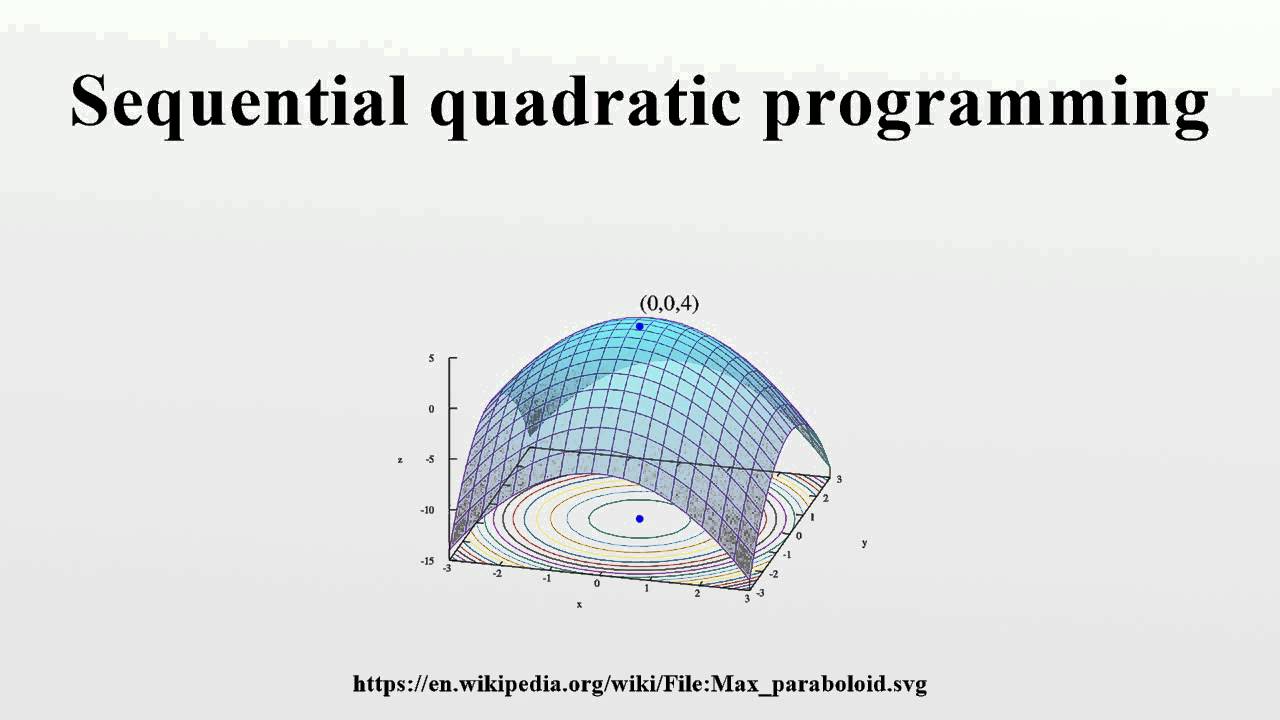

# 13.6.1 Solving the KKT Necessary Conditions

There are two basic approaches to solving the QP subproblem: the first is to write the KKT necessary conditions and solve them for the minimum point; the second is to use a search method to solve for the minimum point directly. Since the QP subproblem is strictly convex, solving the KKT necessary conditions, if a solution exists, gives a global minimum point for the function.
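As a minimal sketch of the first approach, assume the subproblem has the form: minimize \(q(d) = c^T d + \tfrac{1}{2} d^T H d\) subject to equality constraints \(N_E^T d = e_E\) only. The objective form and the omission of inequality constraints are assumptions made for illustration, and the helper name `solve_equality_qp` and the numbers are hypothetical. Under these assumptions the KKT conditions reduce to a single linear system in \(d\) and the multipliers \(v\).

```python
import numpy as np


def solve_equality_qp(H, c, N_E, e_E):
    """Solve min c^T d + 0.5 d^T H d  s.t.  N_E^T d = e_E via the KKT system.

    Hypothetical helper for illustration; inequality constraints are not handled.
    """
    n, p = N_E.shape
    # Cholesky raises LinAlgError if H is not positive definite
    # (H is assumed symmetric; only its lower triangle is read).
    np.linalg.cholesky(H)
    # KKT conditions:  H d + N_E v = -c   and   N_E^T d = e_E
    K = np.block([[H, N_E], [N_E.T, np.zeros((p, p))]])
    rhs = np.concatenate([-c, e_E])
    sol = np.linalg.solve(K, rhs)
    return sol[:n], sol[n:]  # d, multipliers v


# Illustrative data (not taken from the text)
H = np.array([[2.0, 0.0], [0.0, 2.0]])
c = np.array([-2.0, -5.0])
N_E = np.array([[1.0], [1.0]])  # one equality constraint: d1 + d2 = 1
e_E = np.array([1.0])

d, v = solve_equality_qp(H, c, N_E, e_E)
print("d =", d, "v =", v)
```

For this data the system gives \(d = (-0.25, 1.25)\) with multiplier \(v = 2.5\). Because \(H\) is positive definite and the single column of \(N_E\) is linearly independent, the KKT matrix is nonsingular and this point is the global minimum.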

# Quadratic programming update

Note that a slightly different notation is used in this section to define the constraints of the QP subproblem, in order to present the numerical algorithms in a more compact form: p is the number of equality constraints and m is the total number of constraints. The dimensions of the vectors and matrices are: c, n × 1; d, n × 1; \(e_E\), p × 1; \(e_I\), (m − p) × 1; H, n × n; \(N_E\), n × p; and \(N_I\), n × (m − p). It is assumed that the columns of the matrices \(N_E\) and \(N_I\) are linearly independent. It is also assumed that the Hessian H of q(d) is a constant and positive definite matrix. Therefore, the QP subproblem is strictly convex, and if a solution exists, it is a global minimum for the objective function. Note that H is an approximation of the Hessian of the Lagrangian function for the constrained nonlinear programming problem. Also, an explicit H may not be available, because a limited-memory updating procedure may be used to update H and to calculate the product of H, or of the inverse of H, with a vector.

Let's start by loading the needed Python libraries, loading and sampling the data, and plotting it for visual inspection.

`# Retain only 2 linearly separable classes`

`iris_df = pd.DataFrame(data= np.c_, iris], columns= iris + )`

Let's convert the data to NumPy arrays, and plot the two classes.

`plt.scatter(X, X, c=y.ravel(), alpha=0.5, cmap=`

A classifier using the blue dotted line, however, will have no problem assigning the new observation to the correct class. The intuition here is that a decision boundary that leaves a wider margin between the classes generalises better, which leads us to the key property of support vector machines: they construct a hyperplane in such a way that the margin of separation between the two classes is maximised (Haykin, 2009). This is in stark contrast with the perceptron, where we have no guarantee about which separating hyperplane the perceptron will find.

Let's look at a binary classification dataset with \(N\) observations and labels \(y_i\). The optimisation problem carries the constraint \(\sum_{i=1}^N \alpha_i y_i = 0\). Now let's see how we can apply this in practice, using the modified Iris dataset.
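To make the margin intuition concrete, here is a small sketch that compares two separating lines on a handful of toy points. The points, the two lines, and the helper names are all made up for illustration; they are not the Iris sample or the lines in Figure 1. The geometric margin of a line \(w^T x + b = 0\) is taken as \(\min_i y_i (w^T x_i + b) / \lVert w \rVert\), which is positive only if the line separates the classes.

```python
import numpy as np


def geometric_margin(w, b, X, y):
    """Smallest signed distance of any point to the line w.x + b = 0."""
    return float(np.min(y * (X @ w + b)) / np.linalg.norm(w))


def predict(w, b, x):
    """Assign +1 or -1 according to the side of the line x falls on."""
    return int(np.sign(x @ w + b))


# Toy, linearly separable points (assumed for illustration only)
X = np.array([[1.0, 1.0], [2.0, 1.0], [1.0, 2.0],   # class -1
              [4.0, 4.0], [5.0, 4.0], [4.0, 5.0]])  # class +1
y = np.array([-1, -1, -1, 1, 1, 1])

# Two candidate boundaries with the same direction but different offsets
w_red, b_red = np.array([1.0, 1.0]), -7.5    # passes very close to class +1
w_blue, b_blue = np.array([1.0, 1.0]), -5.5  # halfway between the clusters

margin_red = geometric_margin(w_red, b_red, X, y)
margin_blue = geometric_margin(w_blue, b_blue, X, y)
print("margins:", margin_red, margin_blue)

# A new observation close to the +1 cluster
x_new = np.array([3.9, 3.5])
print("red line predicts:", predict(w_red, b_red, x_new))
print("blue line predicts:", predict(w_blue, b_blue, x_new))
```

Both lines separate the toy training points, but the narrow-margin line puts the new observation on the negative side while the wide-margin line assigns it to class +1.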

Both the red and blue dotted lines fully separate the two classes. The red line, however, is located too close to the two clusters, and such a decision boundary is unlikely to generalise well. If we add a new “unseen” observation (red dot), which is clearly in the neighbourhood of class +1, a classifier using the red dotted line will misclassify it, as the observation lies on the negative side of the decision boundary.

Figure 1 - There are infinitely many lines separating the two classes, but a good generalisation is achieved by the one that has the largest distance to the nearest data point of any class.

Figure 1 shows a sample of Fisher’s Iris data set (Fisher, 1936). The sample contains all data points for two of the classes, Iris setosa (-1) and Iris versicolor (+1), and uses only two of the four original features: petal length and petal width. This selection results in a dataset that is clearly linearly separable, and it is straightforward to confirm that there exist infinitely many hyperplanes that separate the two classes. Selecting the optimal decision boundary, however, is not a straightforward process.